Where Does the Internet Actually Live?

(The Answer Will Blow Your Mind)

You’re scrolling Instagram at midnight, a video buffers in half a second, and you don’t think twice about it. But have you ever stopped and genuinely asked yourself — where did that come from? Like, physically. Where does the internet actually live?

I hadn’t either, not really, until I went down a rabbit hole that ended with me staring at a 1.5-million-square-foot building in Dallas, Texas, that most people have never heard of, and realizing the whole thing felt like a science fiction movie that nobody told us was real.

Here’s what blew my mind: the internet isn’t this magical cloud floating somewhere above us. It’s buildings. Massive, heavily-guarded, temperature-controlled buildings full of humming machines. And the biggest companies in the world — the ones running the apps you use every single day — they’re basically neighbors inside these places, passing data back and forth without ever touching the chaotic, slow, unpredictable internet you and I use.

This post is going to walk you through all of it. From what a data center actually is, to how the internet bypasses itself, to the wild security layers that would make a spy movie blush. Let’s get into it.

The Internet Has a Home — And It’s Not Where You Think

Ask most people where the internet lives and they’ll wave vaguely at the sky and say “the cloud.” Which is technically accurate in the loosest possible sense, but it’s kind of like saying your food comes from “the store.” True, but it completely skips the interesting part.

The internet lives in data centers. Physical buildings, usually enormous, packed floor-to-ceiling with servers, networking gear, and miles of fiber optic cable. These are the places where your emails sit when you’re not reading them, where your Netflix movie is stored before it streams to you, and where AI models think their computationally expensive thoughts.

One of the most important of these buildings in North America is the Dallas Infomart. It’s a retro-futuristic structure that was originally built in the 1980s as a sort of technology shopping mall (imagine IBM having a showroom right next to Kodak), but that dream didn’t really pan out. What it ended up becoming instead was something far more interesting: a building that happened to sit directly on top of a massive convergence of fiber optic cables.

Location, location, location. It’s not just a real estate cliché. It’s literally why the internet works the way it does.

You see, in the US, long-distance fiber optic cables were often laid along existing infrastructure — railway lines and major highways — because those rights-of-way were already cleared. The Infomart is sitting at a critical intersection of these fiber highways. Think of it less like a building and more like Grand Central Station for data.

And then a company called Equinix bought it in 2018 and connected it via a massive underground fiber link to their brand new facility right across the street. Suddenly you had two of the most connected buildings on Earth, talking to each other at terabit speeds, right in downtown Dallas.

Getting Inside One of These Places Is Harder Than You’d Think

I’ll be honest — I’d always assumed data centers were just big rooms with servers you could sort of wander into if you knew someone. I was very, very wrong.

The security inside a modern colocation data center is genuinely intense. We’re talking multiple layers of authentication before you ever see a single server rack. Here’s roughly what the experience looks like:

- Reception checkpoint: You show government-issued ID and get logged in the system. No ID, no entry — full stop.

- The man trap: This is the part that sounds like something out of a heist movie. It’s a small enclosed area — like an airlock — where both doors cannot be open at the same time. You badge in on one side, the door locks behind you, and only then can you authenticate on the other side to proceed.

- Floor access: You only get access to the specific floor where your equipment lives. Not the whole building. Your floor.

- Cage access: And even on your floor, you only get into your cage — a locked enclosure housing your servers — with another badge tap and fingerprint scan.

- Constant escort: Even after all of this, if you’re a visitor, you cannot walk anywhere alone. You’re accompanied at every moment.

Why so much security? Because the equipment inside these buildings represents hundreds of billions of dollars in infrastructure, and a large portion of the global internet runs through them. One bad actor with physical access could cause catastrophic damage.

There’s a social engineering attack called “tailgating” — where someone simply follows an authorized person through a door before it closes. The man trap design specifically defeats this, because even if you squeeze in behind someone, you’re now stuck in a sealed chamber until you can authenticate independently. It’s a deceptively simple and incredibly effective physical security measure.
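To make the interlock rule concrete, here is a minimal sketch of a two-door airlock as a tiny state machine. The `Mantrap` class and its method names are made up for illustration; real access-control systems are far more involved (occupancy sensors, biometrics, audit logs), so treat this as a toy model of the "both doors cannot be open at the same time" rule and nothing more.

```python
class Mantrap:
    """Toy two-door airlock: a door only opens if the other one is closed."""

    def __init__(self) -> None:
        self.outer_open = False
        self.inner_open = False

    def request_outer(self, authenticated: bool) -> bool:
        """Try to open the outer door; returns True only if it opened."""
        if authenticated and not self.inner_open:
            self.outer_open = True
            return True
        return False

    def request_inner(self, authenticated: bool) -> bool:
        """Try to open the inner door; returns True only if it opened."""
        if authenticated and not self.outer_open:
            self.inner_open = True
            return True
        return False

    def close_outer(self) -> None:
        self.outer_open = False

    def close_inner(self) -> None:
        self.inner_open = False


if __name__ == "__main__":
    trap = Mantrap()
    trap.request_outer(authenticated=True)          # employee badges in, tailgater slips in behind
    print(trap.request_inner(authenticated=True))   # False: outer door is still open
    trap.close_outer()
    print(trap.request_inner(authenticated=False))  # False: tailgater has no credentials
    print(trap.request_inner(authenticated=True))   # True: employee authenticates on their own
```

The point of the design is that getting through the first door buys an intruder nothing: the second door asks its own question, and it only listens once the first door is shut.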

One detail I found especially clever: many of these data centers use blue lighting throughout. It looks cool, sure, but there’s actually a functional reason. Blue light makes it much harder to read the labels on equipment in the cages. If you were a competitor walking past someone’s rack, you’d struggle to identify exactly what hardware they’re running. It’s defense through ambiguity — yet another subtle layer on top of everything else.

This is a concept called defense in depth: don’t rely on any single security measure; stack multiple layers so that even if one fails, the others hold. It applies to digital security just as much as physical, and it’s genuinely the right way to think about protecting anything valuable.

Inside the Cages: What Are Companies Actually Keeping in There?

Once you’re past all the security checkpoints, what you see is row after row of locked steel mesh enclosures — the cages. Each one belongs to a specific customer, and no one else can get in. Not even the data center staff will open your cage without explicit authorization and a very good reason.

These cages aren’t uniform, either. Some have open tops — theoretically you could climb in Tom Cruise-style, though the 24/7 camera coverage means you’d be tackled within about five seconds. Others have fully enclosed tops, solid walls, and additional mesh reinforcement underneath. The most sensitive customers — the ones you’ve never heard of because they work very hard at that — have enclosures you can’t even see into.

Inside these cages, you’ll typically find:

- Server racks: The physical machines doing the computing — web servers, database servers, application servers.

- Networking gear: Switches, routers, and firewalls managing how data flows in and out.

- GPUs and accelerator cards: Especially common now with AI workloads eating enormous amounts of compute.

- Storage arrays: Where the actual data lives — your files, your photos, your messages.

What you won’t find is any visible indication of whose equipment this is. No logos. No branding. The blue lights help obscure hardware labels. This anonymity is intentional. If your competitor knows you’re running a particular type of load balancer, that’s a data point they shouldn’t have.

Keeping All This Running: Power and Cooling at Scale

Here’s a problem you might not have thought about: a single rack of modern GPU hardware can consume as much electricity as an entire house. Now imagine thousands of racks in a single building. The power and cooling requirements are staggering.

How They Keep It Cool

Traditional data centers used raised floors — elevated platforms with cool air pumped up from below, since hot air naturally rises. But modern facilities, especially those handling dense AI workloads, have moved to a different approach: slab floors (flat concrete) with side-mounted air handlers.

The reason for the switch is simple: slab floors can hold far more weight than raised floors. Modern server racks are incredibly dense and heavy, and a raised floor would struggle under that load.

The cooling system itself works a bit like a gaming PC’s liquid cooling loop — just at a scale that would make your head spin:

- Hot air rises to the top of the facility

- It’s captured and pushed through air handlers on the roof

- On the roof, massive self-contained cooling units — each one airlifted in as a single unit — act as giant radiators

- Chilled water circulates through the system, absorbing heat and releasing it outside

- Buffer water tanks provide emergency cooling capacity if something in the system fails
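If you want a feel for the scale of that chilled water loop, the basic physics is a one-line formula: heat removed equals mass flow times the specific heat of water times the temperature rise. The sketch below just rearranges that to get a flow rate. The 1 MW heat load and 10 degree temperature rise are hypothetical numbers picked for illustration, not figures from the Infomart or any real facility.

```python
# Approximate specific heat of liquid water, in joules per kilogram per kelvin.
WATER_SPECIFIC_HEAT = 4186.0


def water_flow_kg_per_s(heat_load_watts: float, delta_t_kelvin: float) -> float:
    """Mass flow of water needed to carry away a heat load.

    Rearranged from Q = m_dot * c_p * delta_T.
    """
    if delta_t_kelvin <= 0:
        raise ValueError("temperature rise must be positive")
    return heat_load_watts / (WATER_SPECIFIC_HEAT * delta_t_kelvin)


if __name__ == "__main__":
    # Hypothetical example: 1 MW of server heat, water warming by 10 K in the loop.
    flow = water_flow_kg_per_s(1_000_000, 10)
    print(f"{flow:.1f} kg of water per second")  # roughly 24 kg/s, about 24 liters
```

Under those assumptions you need roughly a bathtub of water every several seconds, continuously, for every megawatt of servers. That gives some intuition for why buffer tanks matter when a pump or chiller drops out.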

What Happens When the Power Goes Out?

The internet can’t exactly afford to take a nap because the local utility company had a bad day. So these facilities maintain diesel generator farms that can power the entire operation for up to 36 hours at full load. That’s not a typo — 36 hours of fully independent operation, waiting for the grid to come back or for fuel to be resupplied.

The Dallas Infomart and its neighboring facility together host over 140 networks peering at terabit speeds, 8,700+ strands of fiber, and enough generator capacity to run independently for up to a day and a half. It’s not an exaggeration to call it one of the most critical buildings on Earth.

Beginner’s Guide: What Is “The Internet,” Really?

Alright, let’s pause for a second because I want to make sure we’re all on the same page. Some of this gets technical fast, so here’s a quick grounding.

The Internet Is a Network of Networks

The internet isn’t owned by any single company or government. It’s a massive interconnected web of networks — AT&T’s network, Verizon’s network, Comcast’s network, plus thousands of smaller ones — all agreeing to exchange traffic with each other. This exchange happens at Internet Exchange Points (IXPs).

An IXP is basically a physical location where these networks meet and hand off data to each other. The Infomart hosts some of the most important exchange points in the southwestern United States.

What’s a “Packet”?

When you load a webpage, your request doesn’t travel as one big block of data. It gets broken into small chunks called packets, which are forwarded through a series of routers until they all arrive at the destination and get reassembled. Packets can take different routes and arrive out of order, so each one carries enough information to be put back in sequence.
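Here is a small sketch of that split-and-reassemble idea. It is a deliberate simplification: the function names `packetize` and `reassemble` are invented for this post, and real protocols like TCP add headers, checksums, retransmission, and byte-based sequence numbers on top. The core trick is the same, though: tag each chunk with its position so order can be restored on arrival.

```python
import random


def packetize(data: bytes, size: int) -> list[tuple[int, bytes]]:
    """Split data into (sequence_number, chunk) pairs of at most `size` bytes."""
    if size <= 0:
        raise ValueError("packet size must be positive")
    return [
        (seq, data[start:start + size])
        for seq, start in enumerate(range(0, len(data), size))
    ]


def reassemble(packets: list[tuple[int, bytes]]) -> bytes:
    """Restore the original bytes, whatever order the packets arrived in."""
    ordered = sorted(packets, key=lambda packet: packet[0])
    return b"".join(chunk for _, chunk in ordered)


if __name__ == "__main__":
    message = b"Where does the internet actually live?"
    packets = packetize(message, size=8)
    random.shuffle(packets)  # simulate packets arriving out of order
    assert reassemble(packets) == message
    print(f"{len(packets)} packets reassembled correctly")
```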

What Is Latency and Why Does It Matter?

Latency is the time it takes for a packet to travel from point A to point B and back, also called round-trip time. It’s measured in milliseconds. For most web browsing, latency under 100ms feels instant. For real-time applications like gaming, video calls, and stock trading, even 30ms of extra latency can cause problems.

This is exactly why businesses pay serious money to connect directly inside data centers, rather than routing everything through the public internet with its unpredictable hops and delays.

| Connection Type | Typical Latency | Reliability | Use Case |
|---|---|---|---|
| Public Internet | 10–200ms (variable) | Moderate | Regular consumer usage |
| Dedicated Cross-Connect | <5ms (stable) | Very High | Business-critical workloads |
| Software-Defined Fabric | <5ms (stable) | Very High | Multi-cloud enterprise setups |
| Direct Cloud Connection | 1–5ms (stable) | Highest | Financial, AI, real-time apps |
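Part of why those numbers differ is plain physics. Light in optical fiber travels at roughly 200,000 km per second (about two thirds of its speed in a vacuum), which puts a hard floor under latency no matter how good your equipment is. The sketch below computes that floor. It assumes a perfectly straight fiber run and ignores routers, queuing, and processing, so real-world latency will always be higher.

```python
# Approximate speed of light in optical fiber (about 2/3 of c in a vacuum).
FIBER_SPEED_KM_PER_S = 200_000.0


def min_round_trip_ms(distance_km: float) -> float:
    """Lower bound on round-trip time over fiber, in milliseconds."""
    one_way_seconds = distance_km / FIBER_SPEED_KM_PER_S
    return 2 * one_way_seconds * 1000


if __name__ == "__main__":
    # Distances are illustrative, not measurements of any real route.
    for label, km in [("same building", 0.1), ("same metro", 50), ("cross-country", 4000)]:
        print(f"{label:>14}: at least {min_round_trip_ms(km):.3f} ms round trip")
```

A handy rule of thumb falls out of the math: every 100 km of fiber costs about 1 ms of round-trip time. That is why a cable across the room beats a route across the country, every time.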

How the Internet Bypasses Itself — The Magic of Cross-Connects and Fabric

“The biggest companies in the world don’t use the internet like you and I do. They’re neighbors — bypassing the chaos entirely.”

Here’s the part that genuinely fascinated me. When you access AWS or Azure from home, your request travels across the public internet — hopping through dozens of routers, potentially spanning thousands of miles, bouncing through congested exchange points. It mostly works fine, but it’s not exactly elegant.

Now imagine you’re a large enterprise. You’ve got thousands of employees, critical databases, customer-facing applications, and you’re running a hybrid cloud setup — some stuff in your own servers, some in AWS, some in Azure. You cannot afford that public internet flakiness. You need direct connections.

What’s a Cross-Connect?

A cross-connect is literally a physical cable that runs from your cage to another customer’s cage in the same data center. Want a direct connection to AWS? Order a cross-connect. They run a cable from AWS’s equipment to yours, and now you have a dedicated, private, low-latency link to their infrastructure — no public internet involved.

The catch: these are expensive, and they take time to provision. We’re talking weeks or months and thousands of dollars. And if you need connections to AWS, Azure, GCP, AT&T, and Cloudflare separately? That’s five cross-connects, five bills, five wait times.

Enter: Software-Defined Fabric

This is where it gets genuinely clever. Equinix built something called Equinix Fabric — essentially a software-defined network that sits inside their data centers and lets you make virtual connections instantly, instead of waiting for someone to run a physical cable.

Here’s how it works in practice:

- You order one physical port — let’s say 10 Gbps — into the Fabric network

- Through a web portal, you can then split that bandwidth however you like: 2 Gbps to AWS, 2 Gbps to Azure, 1 Gbps to GCP, 2 Gbps to your ISP for internet traffic, 3 Gbps in reserve

- You’re clicking through a web interface, not running cable

- Changes take seconds, not months

- Over 3,000 companies are available on this network, including every major cloud provider
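The bandwidth-splitting idea above can be sketched in a few lines. To be clear, this is not the Equinix Fabric API or portal. The `allocate` function is a hypothetical helper that only models the bookkeeping: virtual connections carved out of one physical port, with whatever is left over held in reserve.

```python
def allocate(port_gbps: int, requests: dict[str, int]) -> dict[str, int]:
    """Carve virtual connections out of one physical port (illustrative only).

    Returns the requested allocations plus a "reserve" entry for unused capacity.
    Raises ValueError if the requests exceed what the port can carry.
    """
    if any(gbps <= 0 for gbps in requests.values()):
        raise ValueError("each allocation must be positive")
    total = sum(requests.values())
    if total > port_gbps:
        raise ValueError(f"requested {total} Gbps but port is only {port_gbps} Gbps")
    allocations = dict(requests)
    allocations["reserve"] = port_gbps - total
    return allocations


if __name__ == "__main__":
    # The split described in this post, on a single 10 Gbps port.
    plan = allocate(10, {"AWS": 2, "Azure": 2, "GCP": 1, "ISP": 2})
    for destination, gbps in plan.items():
        print(f"{destination:>7}: {gbps} Gbps")
```

Changing the plan is just calling the function again with different numbers, which is the whole appeal: the physical port stays put while the virtual connections get reshuffled in software.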

And here’s the kicker: because Equinix has data centers across dozens of cities globally, once you’re on their network, you’re traveling on their private infrastructure between locations — not the public internet. You’ve essentially stepped off the highway and onto a private road with no traffic.

A financial services firm processing stock trades needs sub-millisecond connections to cloud providers, market data feeds, and their own trading servers. Instead of routing through the public internet — with its unpredictable delays — they connect through a facility like this. Every millisecond saved on a trade can be worth real money. This is why proximity to internet exchange points is genuinely valuable real estate for certain businesses.

Pro Tips: What IT Pros and Engineers Know About the Internet That Most People Don’t

If you work in tech — or you’re trying to build something that depends on the internet being fast and reliable — here are the things that most beginners take a while to figure out.

- Colocation vs. the cloud isn’t always a price question. Some workloads are better in a colo facility than in public cloud, especially high-bandwidth ones. Egress fees from AWS and Azure add up fast. Running your own servers in a colo can be cheaper at scale.

- Carrier neutrality matters more than you think. Avoid data centers that are tied to a single ISP. Carrier-neutral facilities give you options, competitive pricing, and redundancy if one provider has an outage.

- Physical location is a latency variable. Hosting your servers in a data center that’s geographically close to your users — and well-connected to CDN providers — will always beat throwing more hardware at the problem.

- Defense in depth applies physically, not just digitally. The blue lights, the man traps, the biometric authentication — none of these alone would stop a sophisticated attacker. Together they create enough friction that most threats don’t get through.

- Power redundancy should be non-negotiable. If you’re evaluating a colo facility, ask about their UPS (uninterruptible power supply) setup, their generator capacity, and how long they can run on backup power. Any serious facility should have an answer immediately.

- Dark fiber is a strategic asset. “Dark fiber” — unused fiber optic cable already in the ground — can be leased directly by large companies wanting private, high-bandwidth connections. If your data volume is enormous, this can be more cost-effective than leasing connectivity from a carrier.

Common Mistakes People Make When Thinking About the Internet

This isn’t just theoretical — these misconceptions lead to real problems in how businesses and individuals approach their internet infrastructure.

Mistake #1: Assuming “Cloud” Means Reliable

The major cloud providers — AWS, Azure, GCP — have all had notable outages that took down huge chunks of the internet with them. Relying on a single cloud provider without redundancy is a risk that many smaller companies don’t fully reckon with until it’s too late.
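A bit of back-of-the-envelope math shows why redundancy matters. If two providers fail independently, you are only down when both are down at once. The sketch below works that out. The 99.9% figure is a hypothetical number, not any provider’s actual SLA, and the independence assumption is generous, since real outages are often correlated (shared DNS, shared dependencies, your own bad deploy).

```python
import math

HOURS_PER_YEAR = 8760


def combined_availability(availabilities: list[float]) -> float:
    """Availability of redundant providers, assuming independent failures."""
    return 1 - math.prod(1 - a for a in availabilities)


def downtime_hours_per_year(availability: float) -> float:
    """Expected hours of downtime per year at a given availability."""
    return (1 - availability) * HOURS_PER_YEAR


if __name__ == "__main__":
    single = 0.999  # hypothetical "three nines" provider
    dual = combined_availability([single, single])
    print(f"one provider:  {downtime_hours_per_year(single):.2f} hours down per year")
    print(f"two providers: {downtime_hours_per_year(dual) * 60:.2f} minutes down per year")
```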

Mistake #2: Thinking Bandwidth Equals Speed

More megabits doesn’t fix latency. A 1 Gbps connection with 200ms of latency will feel slower for interactive applications than a 100 Mbps connection with 5ms latency. These are fundamentally different problems and require different solutions.
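You can check this with a rough model: every request costs one round trip plus the time to push the bytes through the pipe. The sketch below compares the two links from the paragraph above. The workloads (50 small sequential requests of 20 KB, and one 1 GB download) are made-up examples, and the model ignores things like TCP slow start and parallel connections, so read the results as intuition rather than a benchmark.

```python
def transfer_seconds(round_trips: int, bytes_per_trip: float,
                     bandwidth_bps: float, rtt_seconds: float) -> float:
    """Rough time for sequential request/response exchanges over a link."""
    per_trip = rtt_seconds + (bytes_per_trip * 8) / bandwidth_bps
    return round_trips * per_trip


if __name__ == "__main__":
    fat_but_far = {"bandwidth_bps": 1e9, "rtt_seconds": 0.200}     # 1 Gbps, 200 ms
    thin_but_near = {"bandwidth_bps": 100e6, "rtt_seconds": 0.005}  # 100 Mbps, 5 ms

    # Interactive workload: many small requests, one after another.
    for name, link in [("1 Gbps / 200ms", fat_but_far), ("100 Mbps / 5ms", thin_but_near)]:
        t = transfer_seconds(50, 20_000, **link)
        print(f"{name}: 50 small requests take about {t:.2f} s")

    # Bulk workload: a single large download flips the result.
    for name, link in [("1 Gbps / 200ms", fat_but_far), ("100 Mbps / 5ms", thin_but_near)]:
        t = transfer_seconds(1, 1e9, **link)
        print(f"{name}: one 1 GB download takes about {t:.1f} s")
```

The interactive case is dominated by round trips, so the low-latency link wins easily. The bulk download is dominated by raw throughput, so the fat pipe wins. Same two links, opposite answers, which is exactly why these are different problems.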

Mistake #3: Ignoring Physical Security

In the era of cybersecurity headlines, physical security often gets overlooked. But someone with physical access to your servers can bypass virtually every software security measure you’ve put in place. The data centers above take physical security seriously precisely because the professionals inside them understand this.

Mistake #4: Underestimating the “Last Mile” Problem

All this incredible infrastructure in the data center doesn’t matter much if the connection between your user’s device and the nearest network node is slow or congested. This “last mile” — the connection from the local ISP node to the customer’s home or office — is often the weakest link in the chain, and it’s the part that gets the least attention.

Mistake #5: Treating the Internet as Permanent Infrastructure

The internet we have today looks very different from the internet of 20 years ago, and it’ll look very different 20 years from now. The buildings, the fiber routes, the protocols — all of it is constantly evolving. The Infomart was almost forgotten as a failed shopping mall before becoming one of the most critical internet hubs in the Southwest. Infrastructure has a funny way of finding its highest use.

There’s something genuinely humbling about standing in a data center and realizing that when your friend on the other side of the world sends you a message, it’s physically processed in a building like this one. Someone designed these systems. Someone ran the fiber. Someone is on call right now making sure the cooling units don’t fail. The internet is an abstraction we interact with every day, but it’s built on real things, in real places, maintained by real people.

The employees at these facilities — some of them with 20-plus years of tenure — represent a kind of institutional knowledge that can’t be replicated by a chatbot or a Wikipedia article. They know where every fiber run goes. They know which cage had a weird power issue three years ago. They are, in a very real and underappreciated sense, the people who keep the lights on for the rest of us.

The Future of the Internet’s Physical Layer

The internet isn’t getting lighter or more ethereal — it’s getting heavier, more physical, more dependent on buildings like the ones we’ve talked about today. A few trends worth watching:

AI Is Eating Data Center Capacity

Training and running large AI models requires enormous amounts of compute. We’re talking racks upon racks of high-end GPUs, with a single rack drawing tens of kilowatts. The cooling and power demands for AI workloads are significantly higher than traditional server workloads, which is driving a wave of new data center construction and pushing the limits of existing facilities.

Edge Computing Is Spreading the Physical Internet

Rather than routing everything to massive central data centers, edge computing pushes smaller compute nodes closer to users — at cell towers, in neighborhoods, inside office buildings. This reduces latency for time-sensitive applications like autonomous vehicles and augmented reality.

Subsea Cables Are the International Internet

Everything we’ve talked about so far is the domestic internet. The international internet runs almost entirely on undersea fiber optic cables: physical cables lying on the ocean floor, connecting continents. There are hundreds of them, and they’re among the most critical pieces of infrastructure on the planet. When one gets damaged by a ship anchor or a seismic event, entire regions can see degraded internet performance.

Frequently Asked Questions

What exactly is a data center, and how is it different from regular server hosting?

A data center is a large facility designed specifically to house computing infrastructure — servers, storage, networking equipment — at scale. Regular server hosting might mean a company running a few servers in a back room somewhere. A colocation (colo) data center is a purpose-built facility with industrial-grade power, cooling, connectivity, and security, rented out to multiple companies who each get their own locked space. The distinction matters because a proper data center provides redundancy and reliability that a DIY setup simply can’t match.

Can anyone rent space in a facility like the Dallas Infomart or Equinix DA11?

Yes, though “anyone” comes with some caveats. These facilities have minimum commitment requirements — you’re typically renting a cage or a portion of a cabinet, signing a multi-year contract, and paying for both the space and your power draw. Smaller companies often use cloud providers instead, since colocating your own hardware only makes financial sense above a certain scale. But there’s no secret membership — if you need the connectivity and can meet the contractual requirements, you can get in.

What is “carrier neutral” and why should businesses care?

A carrier-neutral data center allows any internet service provider or network to come in and offer their services, rather than being tied exclusively to one telecom. This matters enormously for businesses because it creates competition (driving down prices), provides redundancy (you can use multiple providers), and gives you flexibility to switch providers without physically moving your equipment. Facilities controlled by a single carrier can hold you hostage on pricing and limit your options.

How does Equinix Fabric differ from just getting a regular internet connection?

A regular internet connection routes your traffic across the public internet — through countless routers and exchange points you don’t control, with variable latency and potential congestion. Equinix Fabric gives you a dedicated, software-defined private connection directly to other companies and cloud providers within Equinix’s network. It’s the difference between driving on a public highway (public internet) and having a private road directly to your destination. The latency is lower, the reliability is higher, and the bandwidth is guaranteed rather than best-effort.

Is my personal internet traffic actually going through buildings like this?

Very likely, yes — though probably not in the way you’d imagine. When you load a website or stream a video, your request travels from your device to your ISP, from your ISP to one or more internet exchange points (like the ones at the Infomart), and then to wherever the content is hosted. Your personal traffic is one of billions of packets passing through these facilities constantly. If you’re in or near Dallas, Texas, statistically there’s a good chance your data has touched one of these buildings today.

Why do data centers use blue lighting instead of normal white lights?

Part aesthetic, part functional. The original reasoning was security through obscurity: blue light makes it harder to read equipment labels, preventing someone who shouldn’t be in there from easily identifying what hardware a company is running. These days, the lighting is as much about branding and atmosphere as anything else. But the defense-in-depth philosophy behind it is sound — small layers of friction add up.

The Internet Is Physical — And That Changes How You Should Think About It

I know “the internet lives in buildings” sounds like a boring conclusion to what is actually a pretty remarkable story. But I think there’s something important in holding onto this.

Every time you feel frustrated by slow loading times, every time you hear about a massive cloud outage, every time you wonder why your video call keeps cutting out — the answer is somewhere in the physical world. Fiber under the ocean floor. Diesel generators on a roof in Dallas. A technician who’s been maintaining the same systems for 24 years.

Here’s what you can do with this knowledge:

- If you’re building a product: Think carefully about where you host it. Proximity to internet exchange points matters. Carrier neutrality matters. Redundancy matters.

- If you’re evaluating cloud providers: Ask where their data centers are. Ask about their connectivity. The marketing around “cloud” obscures the fact that you’re renting space in a building just like the ones described here.

- If you’re a security professional: Never neglect the physical layer. The most sophisticated digital security in the world can be undone by someone walking through the wrong door.

- If you’re just a curious person: The next time the internet feels like magic, remember it’s not. It’s engineering. Remarkable, painstakingly maintained, human-made engineering.

The internet is one of the most extraordinary things humans have ever built. It deserves to be understood — not just used.

Mobile App Development with React Native & Expo: A Complete Beginner’s Guide

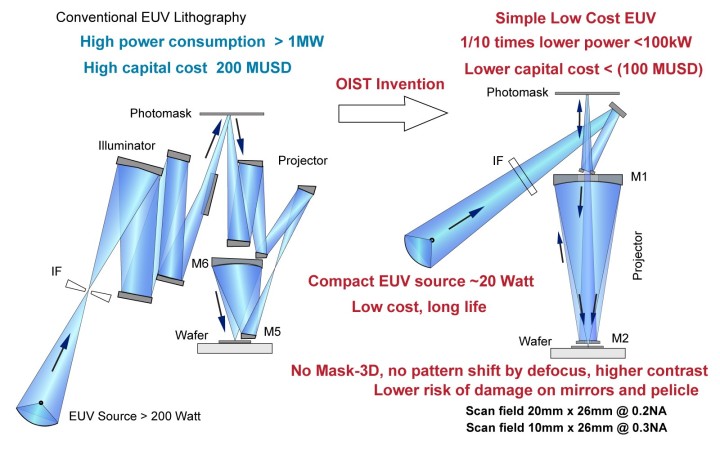

The EUV Lithography Machine That Saved Moore’s Law